After I spent 20 hours building a VC sourcing tool with Claude, it was surfacing companies that looked just like a list another firm would share, and identifying seed-funded companies that were about to sign undisclosed term sheets at massive prices from Tier 1 firms.

This was wildly more successful than I had dreamt of.

I had simple goals of just pulling signals out of a huge corpus of data that wasn’t being used, but as I pulled on the thread, the workflow got deeper and deeper. At this point, I've built a system that is part of my daily workflow and the single pane of glass I work in.

It started with a question: could Claude source interesting companies for me to spend time with?

I do sourcing every day. It's a core part of the job. But there are signals I don't have the bandwidth to review. I can't read every blog, every product launch, every filing. We have access to paid data sources — but there's more data in those than any person can actually process. And many of these sources have very small signals compared to the noise. Like all humans, I get tired. My attention fades after the thirtieth company profile of the day. The machine doesn't have that problem.

To be clear: I don’t believe automated sourcing is the future of venture, but it is a new tool in a toolkit. I still believe human relationships are the best source of signal in early-stage venture. A founder referral from someone you trust is worth more than any dataset. But I’ve known I was leaving data on the table — public signals that are valuable if you could wade through the noise and sheer volume of data. This experiment with Claude was about augmenting, not replacing.

It was also, honestly, about curiosity. VCs are taking pitches every day from AI companies, talking about token budgets and inference costs, sitting in board meetings, opining about what's being built. How can you do that credibly if you haven't actually used the technology? Not just read about it — used it. Built something with it. Hit the walls. That felt like the bare minimum. And the barrier is absurdly low: it's a hundred dollars a month and some intellectual curiosity. Two things a VC should have plenty of.

WHAT I BUILT

Over about two weeks, the initial germ of an idea grew into a full-blown system called RUDIE, because as The Clash wrote in 1979, ‘Rudie Can't Fail.’

Rudie is actually a collection of agents:

ATLAS is the universe-building agent. It pulls from dozens of public sources daily and writes everything into a structured database — no opinions, no scoring, just clean capture. For my purposes, it's focused on companies that have raised less than $10 million. The database currently has over 112,000 companies.

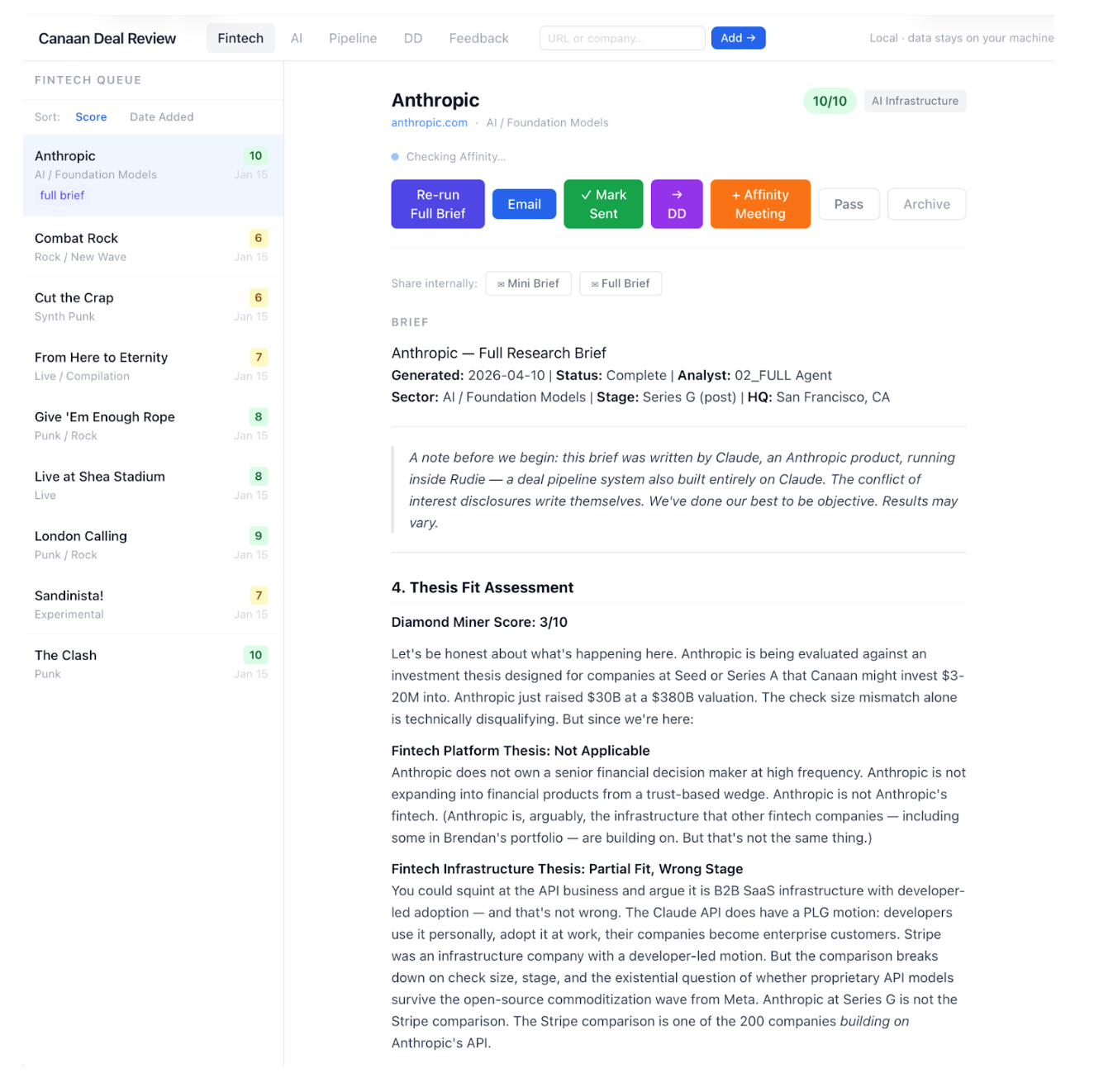

Diamond Miner is the agent that works through everything in ATLAS. It does lightweight web research and scores each company against my theses. We reviewed what surfaced and what didn't, and fed that back into the prompts. It got better. Then we added a second pass to enrich the data more thoroughly (using more tokens) and re-score. The two-pass approach was important: the first pass is cheap and fast to narrow the field, and the second pass goes deeper on the ones that show a signal.
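For readers who want to see the shape of a two-pass pipeline, here is a minimal sketch in Python. It is not Rudie's actual code. The `Company` class, the `two_pass_rank` function, and both scorers are hypothetical stand-ins; in a real system each scorer would call a model, with the second using far more tokens than the first.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass
class Company:
    name: str
    description: str
    score: float = 0.0


def two_pass_rank(
    companies: Iterable[Company],
    cheap_score: Callable[[Company], float],
    deep_score: Callable[[Company], float],
    threshold: float,
    top_n: int,
) -> list[Company]:
    """Score everything cheaply, then re-score only the survivors in depth."""
    # Pass 1: inexpensive scoring over the full universe to narrow the field.
    shortlist = []
    for company in companies:
        company.score = cheap_score(company)
        if company.score >= threshold:
            shortlist.append(company)

    # Pass 2: the expensive scorer runs only on companies that showed a signal.
    for company in shortlist:
        company.score = deep_score(company)

    return sorted(shortlist, key=lambda c: c.score, reverse=True)[:top_n]


# Hypothetical stand-in for a cheap first-pass scorer (assumption, not a model call).
def keyword_score(company: Company) -> float:
    keywords = ("infrastructure", "developer")
    return sum(word in company.description.lower() for word in keywords) / len(keywords)


# Hypothetical stand-in for a deeper second-pass scorer (assumption, not a model call).
def length_weighted_score(company: Company) -> float:
    return keyword_score(company) + min(len(company.description), 100) / 1000
```

The design point is that the expensive scorer never sees the full universe. With over 100,000 companies in the database, the threshold on the first pass is what keeps the second pass affordable.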

BRIEFING AGENTS

Mini Briefs write up a short profile on the top-scoring companies — enough for me to understand the product, the founders' backgrounds, the competitive landscape, and the financing history. These come out daily. This is my daily triage.

Full Briefs go deeper when something catches my eye. Deep research on the founders — their posts, their talks, how they think. Competitive analysis. Differentiation. Connection intelligence from our CRM for warm introductions. These are the basis for outreach.

Strummer is the UI layer. Living in Claude's chat interface doesn't work for a daily workflow, so Claude built me a local web app. It reads and writes to our CRM, shows the daily queue of scored companies and briefs in a single screen, and lets me triage quickly. The system learns from my decisions — what I promote, what I pass on, what I want to dig into.

The whole system runs overnight. I open it each morning with a fresh queue.

All in, the system is about 36,000 lines of code and content — 21,000 lines of Python, a 9,000-line knowledge base and prompt library, a web frontend, all built with Claude Code. It runs locally on my machine. No SaaS fees beyond the tools themselves.

THE HUMAN TOUCH

While all of those agents and scripts are super effective, it quickly became apparent that the system is only as good as what you give it. Two inputs are critical: how I think, and a universe of companies.

Claude is only as good as the context you give it. If you just say "find me interesting seed-stage companies," you'll get generic output. The system has no idea what interesting means to you. So I built a knowledge base: my investment thesis, what I believe, what I think is overhyped, what's in my anti-portfolio and why I passed (and where I might have been wrong), what's in my portfolio, what I've learned, background on my firm, and how we think. It's about 9,000 lines of structured markdown covering the thesis, scoring rubrics, agent instructions, the whole framework. That knowledge base is the soul of the whole system. Without it, you have a fancy search engine. With it, you have something that actually thinks about companies the way I do.

Second, Claude is only as good as the data it has access to. Web search, blogs (it can read all of them), spreadsheets, SEC filings, Product Hunt, GitHub trending, Hacker News, VC portfolio pages, Twitter, LinkedIn. You need to feed it broadly. You also can't expect it to be your only sourcing channel — the best seed-stage companies often don't show up in any public data yet. But as a complement to the human network, it's genuinely useful.

Finally, Rudie has no insight into how companies are actually performing. Because it is limited to public data, it can't tell which companies are hitting an inflection point and which are suffering massive churn. There is no substitute for human relationships, which remain the strongest source of signal.

TWO MOMENTS THAT CONVINCED ME IT WORKS

Early on, I had a batch of scored deals coming out of Diamond Miner — maybe twenty companies, ranked and briefly annotated. I sent them to an associate without context. Didn't say where the list came from. His response: "Which fund sent that list to you? Good list." He assumed a partner at another firm had curated and sent these over. That's a quality benchmark you can't manufacture.

Later, I sent the highest-scoring company from one run (the system's top pick out of thousands) to a friend at another fund. He came back fast: great company, and by the way, they had just closed a Series A at a $150 million post-money valuation from a Tier 1 firm. That round wasn't in any dataset the system had access to. It hadn't been announced. Diamond Miner saw the structural pattern in the public data and scored its way to the answer before the market had the news.

That was the moment I stopped thinking of this as an experiment.

WHAT I LEARNED ALONG THE WAY

Building this taught me more about working with AI than any pitch deck or board meeting ever could. A few things that surprised me:

Memory management is a real skill. Context windows are large but not infinite. Learning how to structure information so the model has what it needs without drowning in noise — that's not trivial. It's closer to database design than it is to writing a prompt.
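To make the database-design comparison concrete, here is a minimal sketch of one way to pick knowledge-base sections for a task under a token budget. It is an illustration, not how Rudie does it. The `Section` class, the tag scheme, and the four-characters-per-token estimate are all assumptions; a real system would use a proper tokenizer and a better relevance measure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Section:
    title: str
    text: str
    tags: frozenset[str]


def estimate_tokens(text: str) -> int:
    # Crude assumption: roughly four characters per token. Not a real tokenizer.
    return max(1, len(text) // 4)


def build_context(sections: list[Section], task_tags: set[str], budget: int) -> str:
    """Pick the sections most relevant to a task that fit within a token budget."""
    # Rank by tag overlap with the task; drop sections with no overlap at all.
    relevant = [s for s in sections if s.tags & task_tags]
    relevant.sort(key=lambda s: len(s.tags & task_tags), reverse=True)

    chosen, used = [], 0
    for section in relevant:
        cost = estimate_tokens(section.text)
        if used + cost > budget:
            continue  # Skip what does not fit; a smaller section may still fit.
        chosen.append(f"## {section.title}\n{section.text}")
        used += cost
    return "\n\n".join(chosen)
```

The tagging is the part that resembles schema design: deciding up front which sections belong to scoring, which to outreach, and which to both determines what the model sees for each task.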

Model selection matters more than you'd think. I started building with Opus and was getting rate-limited like crazy. So I moved to Sonnet because it's faster and cheaper. It wasn't enough. For complex scoring and research tasks, Sonnet would go down rabbit holes, confidently heading in the wrong direction. Opus handled the complexity. Knowing when to use which model (Sonnet for high-volume ingestion, Opus for judgment calls) is a skill you only learn by building. It's also a meaningful cost consideration when you're running agents at scale.
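A minimal sketch of that kind of routing, assuming a simple lookup table. The task names and the `Tier` labels are hypothetical, and the tiers deliberately stand in for model families rather than real model identifiers. Putting first-pass scoring on the faster tier is also an assumption; the split described above only covers ingestion and judgment calls.

```python
from enum import Enum


class Tier(Enum):
    FAST = "fast"      # stands in for a Sonnet-class model
    STRONG = "strong"  # stands in for an Opus-class model


# Hypothetical task names; the split mirrors the one described above.
ROUTES = {
    "ingest": Tier.FAST,
    "first_pass_score": Tier.FAST,   # assumption: cheap pass uses the fast tier
    "second_pass_score": Tier.STRONG,
    "full_brief": Tier.STRONG,
}


def pick_tier(task: str) -> Tier:
    """Return the model tier for a task, defaulting to the stronger model."""
    # Unknown tasks fall back to STRONG: slower and pricier, but less likely
    # to head confidently in the wrong direction on something complex.
    return ROUTES.get(task, Tier.STRONG)
```

Defaulting to the stronger tier trades cost for safety. If volume matters more than judgment for most of your tasks, flipping the default would be the cheaper choice.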

You will hit the walls. I was getting rate-limited two or three times a day during the original build. You're building on someone else's infrastructure, and that has real constraints. Some of those constraints loosened while I was building — Anthropic shipped improvements to context handling, tool use, and the Claude Code experience during the weeks I was working on this. The platform is genuinely moving fast.

Permissions and flow state matter. This might sound small, but it's not. The ability to let Claude operate with bypass permissions — creating files, running code, and iterating without me having to hit "approve" on every action — was critical. If I'd had to confirm every file write and every shell command, I would not have built this. The flow state of working alongside an AI agent, where it just does the thing, and you review the output, is fundamentally different from the interrupt-driven experience of approving each step.

WHAT I THINK THIS MEANS

I don't think what I built is revolutionary. I assume most venture firms either have something like this today or will within six months (they should!). It's table stakes now. But it's also incredibly cool, and building it taught me things best learned from experience.

The broader question is what it means for software. There's been a ton of ink spilled on Twitter about this, but I’ll add my thoughts based on building Rudie.

The system I built is perfect for me. It encodes my thesis, my preferences, and my workflow. I have no desire to make it good enough to distribute — even sharing it internally required meaningful customization. A software vendor would have tried to sell something like this to my firm a year ago at a significant price. But the thing is, we probably wouldn't have bought it. Too hard to prove the value out of the gate. Too much customization is needed to make it actually work for our specific thesis and process. The ROI would have been impossible to demonstrate in a sales cycle.

And yet the value is enormous.

Claude Code and tools like it are meaningfully expanding the market for software. In the case of Rudie, there is more deal-sourcing software in the world today because the cost and barriers to creation have dropped. However, that value isn't accruing to an application software vendor; it's accruing to Anthropic. They charge me for Claude, and I'll pay every month even when (not if) their rates go up, because it's core workflow now. The application-specific value sits on top, and I built that part myself.

I don't think most people will roll their own. Most teams don't have someone willing to spend twenty hours learning how context windows work. So third-party software still has a market. But the upsell motion gets harder. How much of the upsold tool could be built in-house as an add-on? How many systems does an internal team want to support versus just building the next thing themselves? The calculus has shifted.

What I am genuinely excited about is this: the barrier to building just dropped through the floor. That means we'll see an explosion of interesting companies built by people who understand their domain deeply but couldn't code before, or couldn't code fast enough to justify the time. The best AI applications will come from domain experts who know exactly what needs to exist, building with tools that let them create it in hours instead of months. The founders pitching me in two years will have built their first prototype in a weekend with Claude. Some of them will have built it because they were frustrated by the exact tools they were paying for.

That's exciting. That's the thing I keep coming back to.

A MESSAGE TO YOU

If you haven't done it yet, take $100 and start building something. Pick the task you can't stand doing — or the one you know you should do but never have the bandwidth for. For me, it was reading 150 blog posts a day. For you, it'll be something else. See where the journey takes you. The tools are good enough now, and they're getting better every week.

The system is running. There are briefs in my review queue right now. The iteration continues — every week, the knowledge base gets sharper, the scoring gets tighter, the sources expand. It's not finished, and it won't be.

"Rudie can't fail." — Joe Strummer